I was never a fan of recipes. Even less so when I heard that I had to apply them by the book. What I found over the years was that books rarely, if ever, describe a context that is close enough to mine. This means that specific solutions wouldn’t be applicable in the same way as described in the original source.

This is why I typically look for more abstract knowledge and treat more context-dependent advice as inspiration rather than real advice.

From what I see, that’s not a common attitude. I am surprised how frequently at conferences I hear the argument that sessions weren’t practical enough only because no recipe was included. This is only a symptom, though.

A root cause of that is a more general way of thinking about and approaching problems. It is something that we see over and over again when we look at all sorts of transformations and change programs.

People copy the most visible, obvious, and frequently least important practices.

Jeffrey Pfeffer & Robert Sutton

Our bias toward practices is not without reason. After all, we’ve heard the success stories: what Toyota was doing to take the lead in the automotive industry, or the early successes of companies adopting Agile methods. There were plenty of recipes in the stories. After all, that’s what we first see when we look at these organizations.

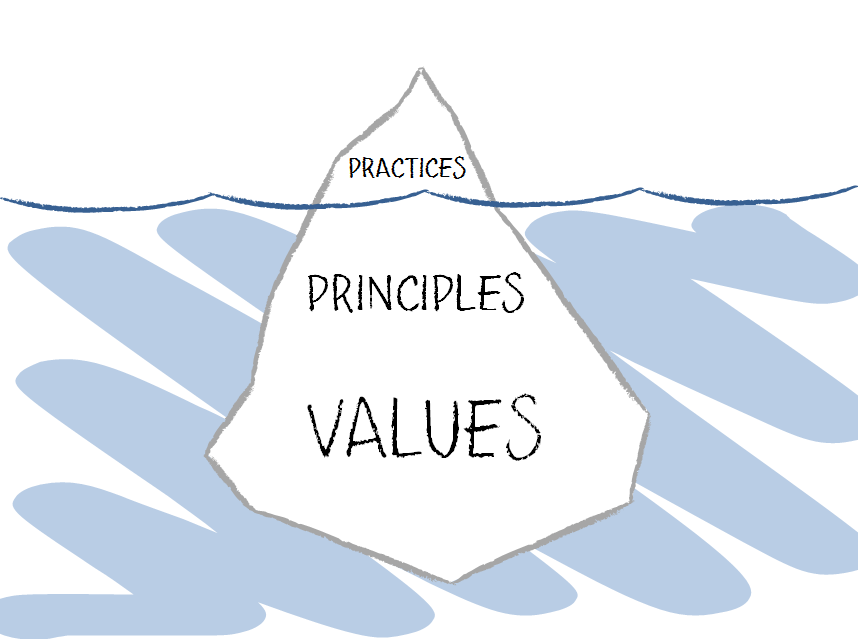

Iceberg

The tricky part is that practices, techniques, tools and methods are just the tip of the iceberg. On one hand, this is exactly what we see when we look at the sea. On the other, there’s this ten times bigger part that is below the waterline. The underwater part is there, and it is exactly what keeps the tip above the water.

In other words, if we took just the visible tip of the iceberg and put it back into the water, the result wouldn’t be nearly as impressive.

This metaphor is very relevant to how organizational changes happen. The thing we keep hearing about in experience reports and success stories is just a small part of the whole context. Unless we understand what’s hidden below the waterline, copying the visible part doesn’t make any sense.

Principles

A thing beyond any practice is a principle. If we are talking about visualization we are implicitly talking about providing transparency and improving understanding of work too. Providing transparency is not a practice. It is a principle that can be embodied by a whole lot of practices.

The interesting part is that there are principles behind practices, but there are also principles that are embraced by an organization. If these two sets aren’t aligned, applying a specific practice won’t work.

Let me illustrate that with a story. There was a team of software architects in a company where Kanban was being rolled out across multiple teams. In that specific team there was huge resistance even at the earliest stage, which is simply visualizing work.

What was happening under the hood was that the transparency provided by visualization was a threat to the people working on the team. They were simply accomplishing very little. Most of the time they would be in meetings, discussions, etc. Transparency was a threat to their sense of safety, thus the resistance.

Without understanding the deeper context, though, one would wonder what the heck was happening and why a method wouldn’t yield results similar to those in another environment.

Values

The part that goes even deeper is values. When talking about values, one thing that typically comes to mind is all sorts of vision and mission statements. This is where we will find the values a company cares about. To be more precise: what an organization claims to care about.

The problem with these is that very commonly there is a huge authenticity gap between the pretense and everyday behaviors of leaders and people in an organization.

One value that would be mentioned pretty much universally is quality. Every single organization cares about high quality, right? Well, so they say, at least.

A good question is what values are expressed by everyday behaviors. If a developer hears that there’s no time to write unit tests and they’re supposed to build more features, or that no one really cares whether a build is green or red, what does that tell you about the real values of a company?

In fact, the pretense almost doesn’t matter at all. Its only role is to build up the frustration of people who see the inauthenticity of the message. The values that matter are those illustrated by behaviors. In many cases we would realize that those values are utilization optimization, disrespect for people, lack of transparency, etc.

Again, this is important because we can find values behind practices. If we take Kanban as an example we can use Mike Burrows’ take on Kanban values. Now, an interesting question would be how these values are aligned with values embraced by an organization.

If they are not, the impact of introducing the method would be very limited, non-existent, or even negative. This is true for any method or practice out there.

Mindfulness

The bottom line is that we need to be mindful in applying practices, tools and methods. That goes really far, as it means not only a deep initial understanding of the tools we use but also an understanding of our own organization.

This goes against the “fake it till you make it” attitude that I frequently see. In fact, in a specific context “making it” may not even be possible, and without understanding the lower part of the iceberg we won’t be able to figure out what’s going wrong and why our efforts are futile.

Paying attention to principles and values also enables learning. Without that we will simply copy the same tools we already know, no matter how applicable they are in a specific context. This is by the way what many agile coaches do.

Mindful use of practice leads to learning; mindless use of practice leads to cargo cult.