As a job applicant, would you pay to make sure someone reads your application?

Here’s a sad reality for many people applying for a job:

- Their competitors (i.e., other candidates) use AI tools to mass apply.

- As a result, hiring companies are flooded with applications, and sifting through all of them is impractical.

- In turn, hiring companies rely on other AI tools to filter out the vast majority of applications (often 95% or more).

- The recruitment game becomes one of prompting one AI agent to pass through the filters of another AI agent.

Realities of Job Seekers A.D. 2025

Imagine that there is a job that you really want to get. It doesn’t even matter why. It may be because you know that the company is great, or the job profile matches your dreams perfectly, or you perceive the experience you’d get there as unique, or whatever. You just want in.

But hey, since all those other people are using AI tools to spam the hiring company’s application form, your submission will disappear in that flood.

It’s even worse than that. If you hand-craft your application to show your genuine care for the job, it’s almost certain that you’ll be rejected. After all, your original story will be written for a hiring manager (a human), but it’s never going to reach them in the first place. It will be rejected by an automated AI tool (a bot) precisely because it’s non-conformist.

Such a resume doesn’t match the most common patterns. There aren’t many similar examples in the AI model’s training data. It’s not common enough.

If you want your application to get past the AI filter, you kinda have to play the game everyone else does. Optimize for what a bot wants. And it’s impractical to do it by hand. Just hire another AI agent to do it for you.

Except that you’ve defeated the purpose that way. First, you aren’t more likely to get through. Second, even in the case that you do, the hiring manager will see another similar, bland-but-professional resume. You will not stand out.

Most importantly, you will not carry over your care about that job.

Recruitment in the AI Era Is Irrevocably Broken

The story above neatly illustrates how broken recruitment has become. What’s more, there’s no going back.

You can pretend it’s 2020 and send your manually-crafted CV, but you’re going to lose to people auto-submitting thousands of AI-generated resumes. Oh, and said resumes will be automatically tweaked to better match a job description, with no human effort whatsoever.

A resume doesn’t work as a token of information exchanged between two humans (a hiring manager and a candidate) anymore.

The career of a resume is over. At least the one that we know. If anything, a CV becomes a token exchanged between two AI agents, neither of which is programmed by the actual candidate.

No matter how hard we try, there’s no coming back. We can’t make resumes unbroken again. Even if we aspirationally tried to restore the original meaning of a CV, there would always be a rogue player who would exploit that trust by mass-applying with generated stuff. And since that would give them a short-term advantage, others would follow suit.

Winning the Game by Not Playing It Altogether

It’s ironic how both sides of this equation, recruiters and candidates alike, are losing in the new setup. Candidates find it harder to show that they care about specific jobs. Companies give up on the best matches because they employ a bot to reject 95% of applicants. And yet, no one can change the rules anymore.

So, is conforming to the new state of things the only option?

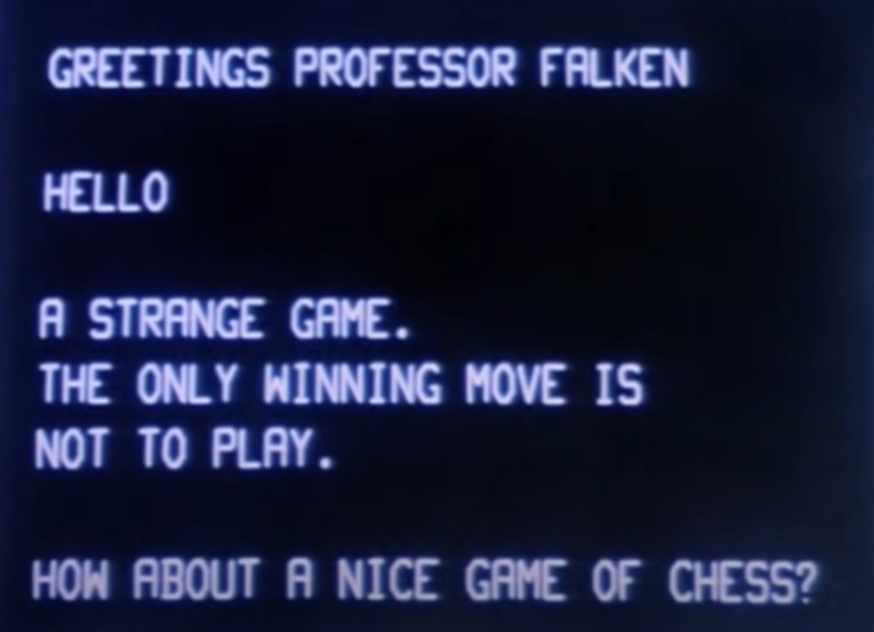

In the classic movie WarGames, the AI, which is trying to “win” the nuclear war, eventually learns that it always ends in mutual assured destruction. The only winning move, thus, is not to play at all.

It’s the same with recruitment. If the current system forces us to mass-produce thousands and thousands of resumes that no one will ever read, we’re just adding noise to the system. The winning move? Not to play.

But wait, if you want to change jobs, how are you supposed not to play the game? If you never apply, you never get that dream job of yours. Or a better one than you have now.

Trust Networks as Antidote to AI Slop

In recruitment, as much as in any other area, we will fall back on trust networks to circumvent the noise. The more toxic AI slop there is in the feed, the less we trust the feed altogether, and the more we rely on human-to-human connections.

One side of relying on trust networks is that companies increasingly go for employee referrals rather than traditional open recruitment processes. That doesn’t solve the other part of the equation, though. What if I am a candidate and want that specific job?

Do the same. Build a connection with someone at that company. We live in an interconnected world, and there are still places where a genuine message will stand out. People who work there may attend local meetups, be active on LinkedIn, maybe publish a blog or a Substack, or engage in some other professional activities. If you care, you will figure that out. Get to know people first, and only then apply.

Does it seem like a lot of effort? That’s precisely the point. It shows how much you care.

Very recently, we made our first hire in almost two years. We didn’t even open a recruitment process. There was this guy who stayed in contact after we talked a few years back. And then, eventually, it was a good time for him and a good time for us. A win-win.

The point is: he made the effort to reconnect. He made it easy for us to remember.

This could only happen because we’ve built the human connection beforehand. We were two parts of the same trust network.

Would You Pay To Put Your Resume on a Hiring Manager’s Desk?

I admit, relying on trust networks is a lot of effort. And it takes time. Both would make the approach impractical at times. So what if there were a shortcut?

That brings me back to my original question. As a candidate applying for a job, would you pay to skip the AI line? Would you pay to ensure that your application is read by a human?

Note that your resume would still go through regular scrutiny. It’s just that you’d know a human would do it, not a black-box AI agent.

There’s an interesting balance here. Make it too cheap, say $0.02, and it changes nothing. People would still be mass-applying all the same, so no one would take that seriously. Make it too expensive, say $200, and it’s probably not a good return on investment for a candidate. After all, paying wouldn’t get the candidate hired or even rated any better. A hiring manager would just read and assess the resume as if it had passed the AI filters.

What’s in it for a candidate? It’s an open avenue to show genuine care. Since the applicant knows they’re not going through AI, they are free to optimize their application for a human reader. Hell, they actually are encouraged to go the extra mile with their application.

What’s in it for a hiring company? I reckon it wouldn’t make sense for a candidate to pay for mass applying, so they’d do that only for jobs they actually care about. So the hiring company gets a token of care along with a resume. Recruiters can still assess skills the way they do, but before committing any effort to interviews, they clearly know which candidates consider the position a great match.

So, would you pay to guarantee your resume is reviewed by a hiring manager? If so, how much?

Here’s a little experiment that’s in the spirit of the post. This link here is a token of human effort behind the post.

웃https://okhuman.com/CuC1uw