Some conference presentations stay with us for a long time, even if there seems to be no particular reason for that. And yet they keep coming back for one reason or another. One such presentation for me was a discussion between Arne Roock and Simon Marcus at Lean Kanban Central Europe years back.

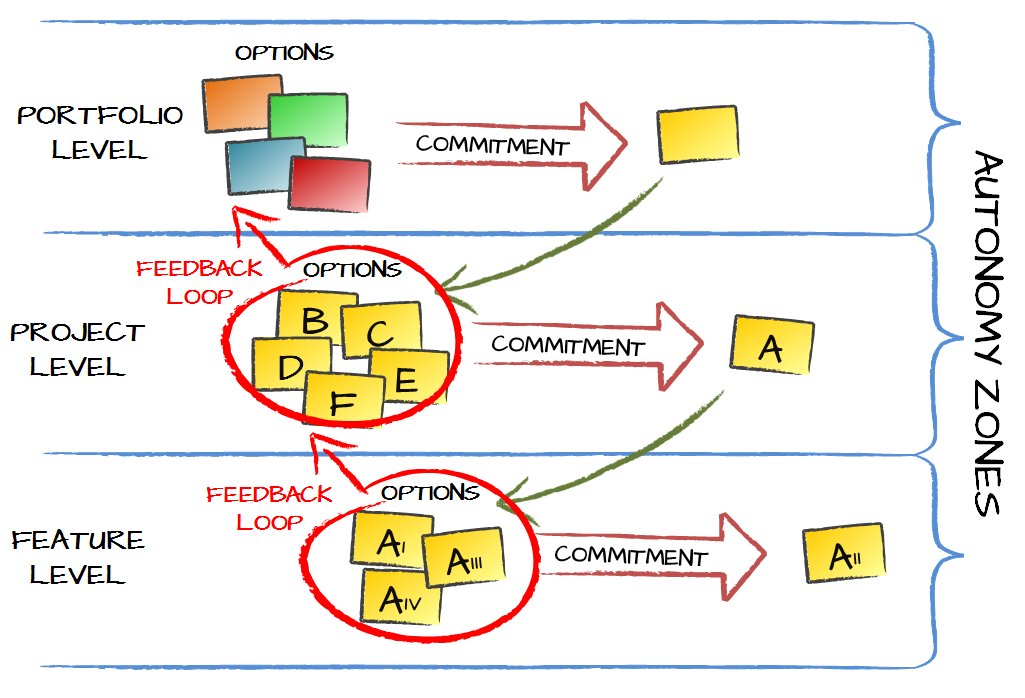

Even though the topic of the discussion was broader, there is one theme that keeps coming back to me: autonomy and alignment. A recurring point was that we can’t enable autonomy unless we have alignment around a strategy, a goal, or whatever it is that orchestrates individual efforts.

As Peter Senge in his classic The Fifth Discipline puts it:

> To empower people in an unaligned organization can be counterproductive.

It obviously makes sense. I mean, distributing autonomy is all fine, but it also creates a risk that everyone will pull the organization in a different direction. Alignment, which comes from understanding a common goal, helps us focus on rowing in the same direction.

At the same time, watching the session back then, I couldn’t help thinking that we at Lunar Logic hadn’t been doing that. We’d been continuously distributing more and more autonomy to everyone, and at the same time there hadn’t been any official strategic purpose set for the organization for quite some time.

In the spirit of the discussion between Arne and Simon, both of whom I respect a lot, that should have felt wrong. And yet it didn’t.

I could even remember my earlier discussions with Jabe Bloom. Jabe pointed out how important the techniques he had adopted were in helping people connect their everyday behaviors with strategic goals.

Nonetheless, I still felt like imposing a strategy onto Lunar Logic would be a bad move.

It was months later when I came across the concept of emergent purpose. In its spirit it’s all about understanding organizational culture. It starts with the assumption that everyone in an organization has their own individual purpose and that it is only natural to pursue it. It means that, given no other guidance, everyone would work toward achieving their own personal goals. Some people would have goals similar to others. Some would have very distinct aspirations. Some would have a much stronger drive to achieve their own goals than others, who would be fine going with the tide.

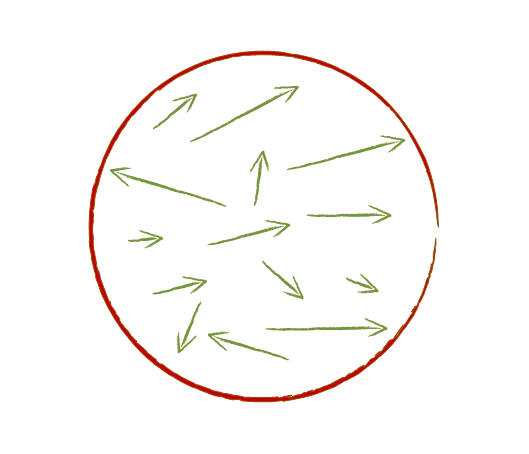

If we tried to visualize that as forces pulling an organization in different directions, it might look like this.

As a matter of fact it would also mean that there is an aggregated force pulling the organization in some direction. And that aggregated force is exactly an emergent purpose.

By its design we don’t set an emergent purpose. It’s simply the outcome of individual purposes. It also means that for some people in an organization the emergent purpose may be the exact opposite of what they individually want. That’s all fine.
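For those who like to see such ideas as code, here is a minimal sketch of the picture above. It assumes, purely for illustration, that each individual purpose can be reduced to a direction on a flat plane and a strength of drive; the numbers and the names `individual_purposes`, `emergent_purpose`, and `alignment` are made up for this example and don't come from any real measurement.

```python
import math

# Hypothetical individual purposes: (direction in degrees, strength of drive).
# These values are invented for illustration only.
individual_purposes = [
    (0, 3.0),    # strong drive in one direction
    (30, 2.0),   # a similar goal, somewhat weaker drive
    (60, 1.0),   # a more distinct aspiration
    (180, 1.5),  # pulling the opposite way
]


def to_vector(angle_deg, strength):
    """Turn a direction and a strength into an (x, y) force."""
    angle = math.radians(angle_deg)
    return (strength * math.cos(angle), strength * math.sin(angle))


def emergent_purpose(purposes):
    """Sum all individual forces into one aggregated force."""
    vectors = [to_vector(angle, strength) for angle, strength in purposes]
    return (sum(x for x, _ in vectors), sum(y for _, y in vectors))


def alignment(purpose, emergent):
    """Cosine between one person's purpose and the aggregated force.

    Close to 1 means pulling the same way, close to -1 means the opposite.
    """
    vx, vy = to_vector(*purpose)
    dot = vx * emergent[0] + vy * emergent[1]
    norm = math.hypot(vx, vy) * math.hypot(*emergent)
    return dot / norm if norm else 0.0


emergent = emergent_purpose(individual_purposes)
for purpose in individual_purposes:
    print(purpose, round(alignment(purpose, emergent), 2))
```

Nobody sets the resulting vector; it falls out of the individual ones. The person pulling at 180 degrees ends up with a negative alignment, which is the "exact opposite" case mentioned above.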

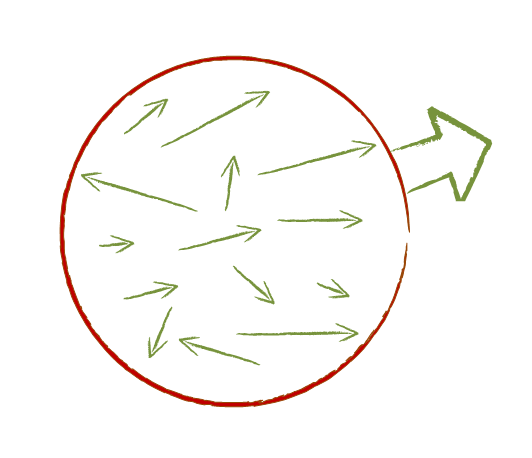

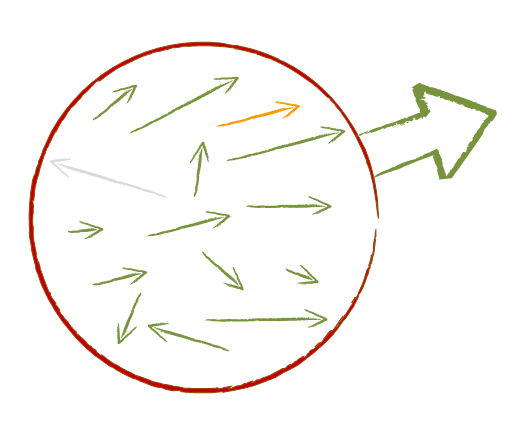

Despite the fact that it’s an emergent property of any organization, we have means to influence the emergent purpose. It happens through hiring. When someone leaves an organization, their influence on the emergent purpose disappears. At the same time, the organization hires someone new whose expectations may be better aligned with the emergent purpose.

Through such a change the emergent purpose is amplified.

There is an interesting dynamic in that process. If my own goals are aligned with the purpose of the organization I’m with, I’m less likely to leave than if they weren’t. As a corollary, my chances of being hired by an organization, and of wanting to join it, are higher if that alignment is in place.

In other words emergent purpose tends to sustain and even amplify itself, even with no conscious effort from leaders of an organization.

The final, and most important, bit about the idea of emergent purpose is that every organization has one. It doesn’t matter whether the organization has an official strategic purpose or not, or how strong it is, or whether there is alignment between the strategy and the emergent purpose. It’s always there, as the only way to get rid of it would be to make people stop having any ambitions, which is equivalent to not having any people in the organization, I guess.

That’s exactly where the fun starts. Given that there always is an emergent purpose, we’d be dumb not to listen to it. Now, I’m not saying we necessarily need to pursue it actively, yet understanding it is crucial.

The reason is that whatever strategy we choose, there will likely be a gap between that strategy and the emergent purpose. The bigger the gap, the more people will become disengaged and, likely, eventually leave. From that perspective there is a price to pay for any strategy and, simply put, the better we understand the emergent purpose, the better equipped we are to achieve our strategic goal. Also, in simple economic terms, there may be strategies that are simply too costly to pursue.

Ideally, you can do what we did at Lunar Logic. We basically turned our emergent purpose into a company strategy. Instead of imposing a strategy on everyone, we listened to each other and figured out the path we most wanted to pursue for the time being. That’s how we evolved our aspiration from helping to build products for our customers efficiently to helping the customers succeed with their products. The latter isn’t focused on the building part nearly as much as the former.

Interestingly enough, out of the potential strategies that we discussed there was one which would make me leave the company eventually. Luckily for me it didn’t end up being our emergent purpose after all.

Of course I understand that few companies would go as far as we did. Even though I think it is an awesome idea, I don’t encourage organizations to make that bold move. Nevertheless, understanding the gap between the aspirations of a company’s leaders and those of everyone else is crucial if we are looking for any reasonable level of sustainability.

Finally, emergent purpose is also one of the possible answers to the autonomy and alignment issue. As long as we understand what our emergent purpose is, we can decide to stick with it or just slightly shape it, instead of building alignment externally through officially set strategic goals.
