Organizational culture is one of those areas that I pay a lot of attention to. Over the years I have come to value the role of culture more and more. The biggest difficulty, though, is that organizational culture is a challenging beast to control.

Organizational Culture

organizational culture

the behavior of humans who are part of an organization and the meanings that the people attach to their actions

includes the organization values, visions, norms, working language, systems, symbols, beliefs, and habits

If we look at how organizational culture is defined, two things are crucial. One is that a culture is formed of the behaviors of all people in an organization. The other is that it’s not only about behaviors but also about what drives these behaviors.

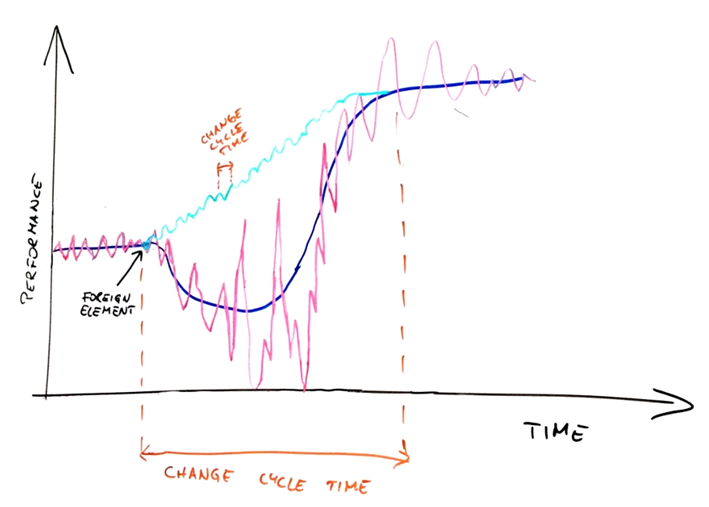

When we look at it we realize that there’s no easy way to mandate a culture change. We can’t simply say: from now on we are a learning organization or that we will value collaboration starting on June the 1st.

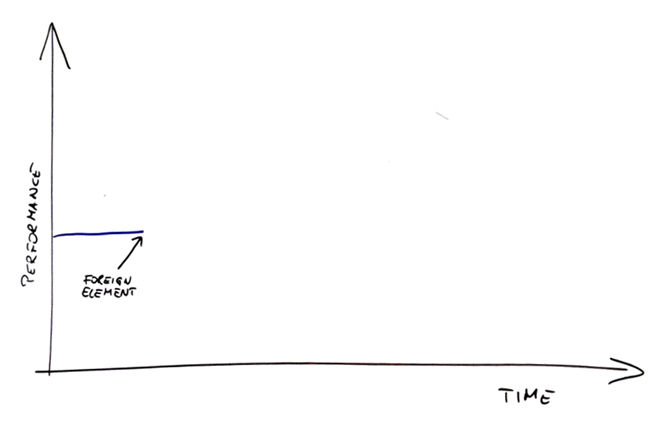

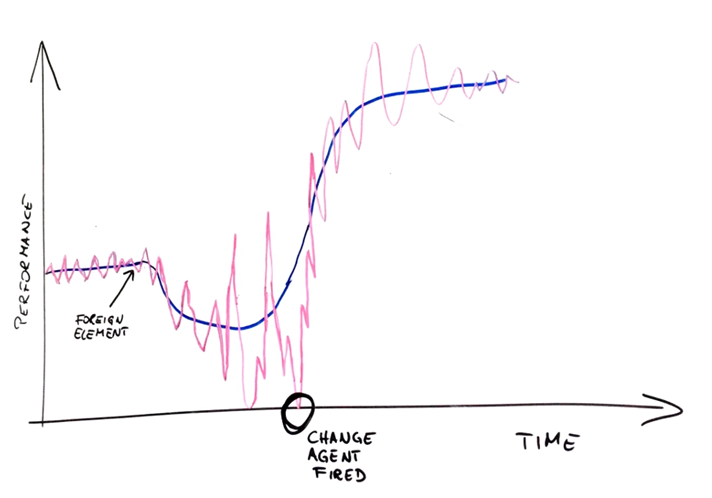

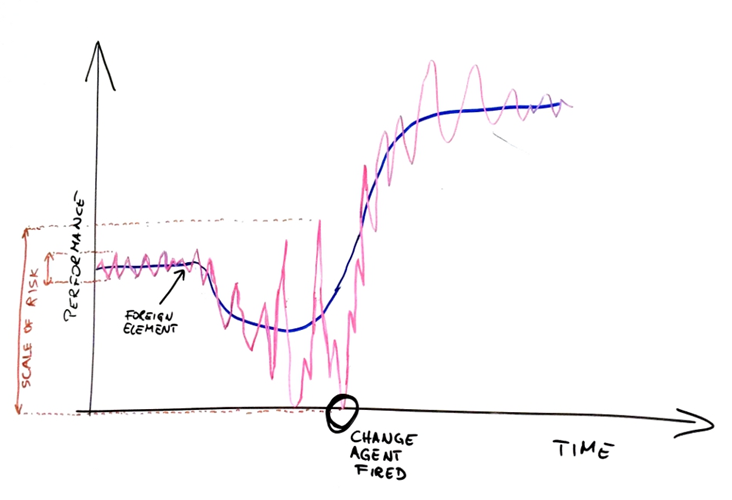

If we want to see a change of culture we need to see a change in behaviors. The bad news, though, is that a change of behaviors can’t really be mandated either. Sure, we can install a policeman who will make sure that everyone behaves according to the new policy we issued. But what happens when the policeman is gone? We can safely assume that over time more and more people would retreat to the old status quo: behaviors they knew and were comfortable with. The change would be temporary and ephemeral.

Identifying Culture

If we want to approach a cultural change we first need to understand the existing culture. What is valued? What principles does the organization live by? How are they reflected in everyday behaviors? Without understanding the starting point, changes would be rather random and doomed to fail.

How to identify the culture then? Look at behaviors. Ultimately the culture is a sum of behaviors of people who are a part of the organization.

There is a serious challenge that we’re facing on that front. Not everyone has equal influence over organizational culture. In fact, the higher in the hierarchy someone is the more influence they typically have.

The mechanism is simple. The higher up in the hierarchy I am, the more positional power I have and the more people my decisions affect. One specific type of decision I make, or at least strongly influence, is who gets promoted in my team. Given all my biases, I will likely promote people who are similar to me, share similar values, and behave in a similar way. I perpetuate and strengthen the existing culture.

That’s, by the way, the rationale behind a piece of advice I frequently share: if you want to figure out what the organizational culture of a company is, look at its CEO. The CEO typically has the most positional power and thus the biggest influence over the company. The way they behave will be copied and mimicked across the board.

Of course we need to pay attention to everyday behaviors and not to what the CEO officially claims. Very frequently there is a gap between the two. That’s something I call the authenticity gap: an organization claims one thing but everyday behaviors show another. For example, they claim to care about customer satisfaction and then they bullshit their customers when it comes to sharing the project status.

This alone says something about culture too (and not a good thing if you need to ask).

Culture Change

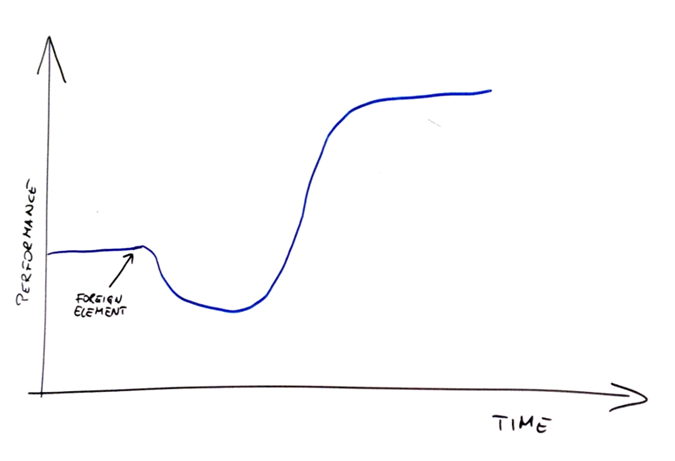

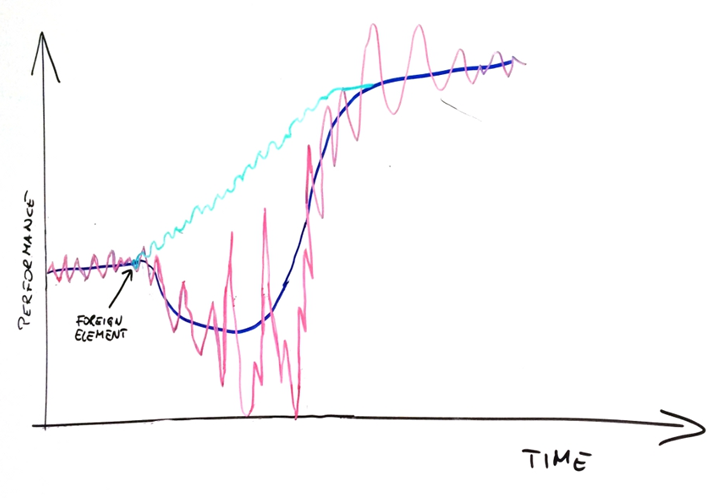

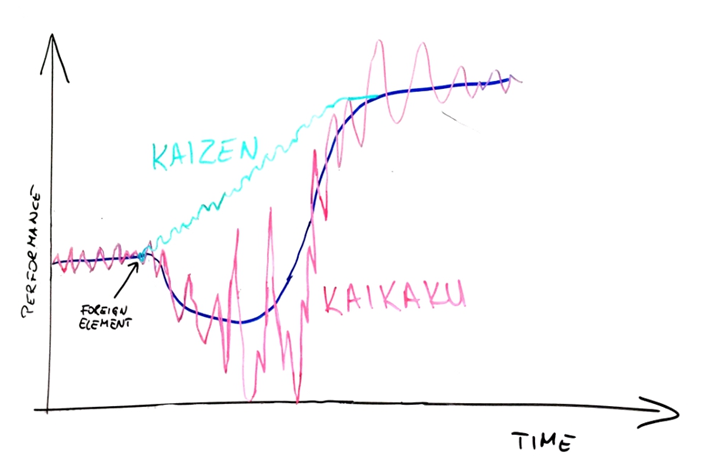

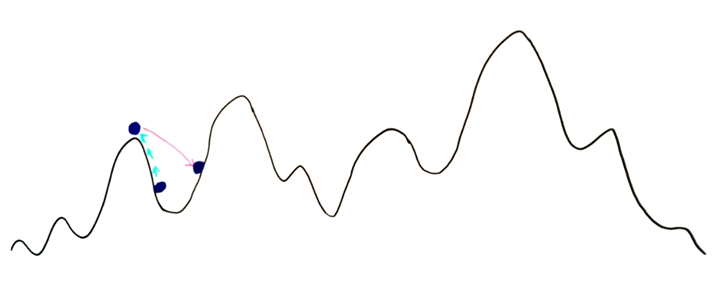

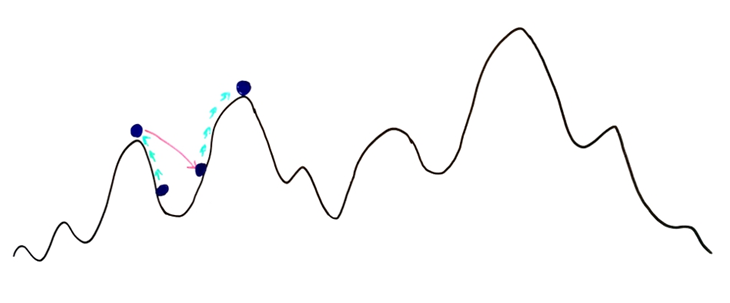

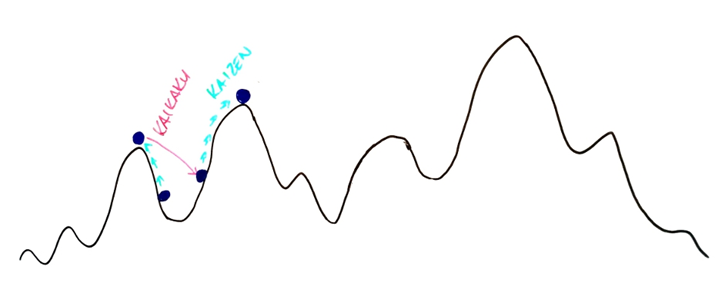

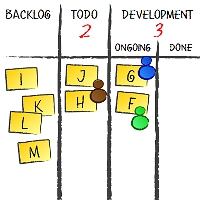

How do we influence cultural change then? If we can influence the factors that drive behaviors, the resulting changes in behaviors will change the culture. It gets even better. When we change organizational constraints we potentially influence behaviors across the board and not only in an individual case.

We have already established, though, that not everyone has equal influence on the culture. People at the top will, in the long run, have the upper hand. First, they control who gets promoted and, as a consequence, who has positional power. Second, that power is needed to change organizational constraints: introduce new rules, change the existing ones, and establish what’s acceptable and what’s not.

A simple answer to how to change organizational culture would be: get top management on board and help them understand what it takes to influence the culture.

Unfortunately, few have the comfort of doing that.

Does it mean that we are doomed? Does it mean that without enlisting top ranks any attempt to change organizational culture will fail? Not necessarily so.

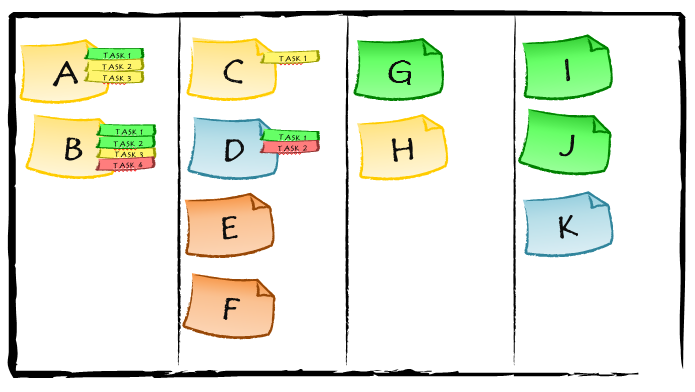

Culture Pockets

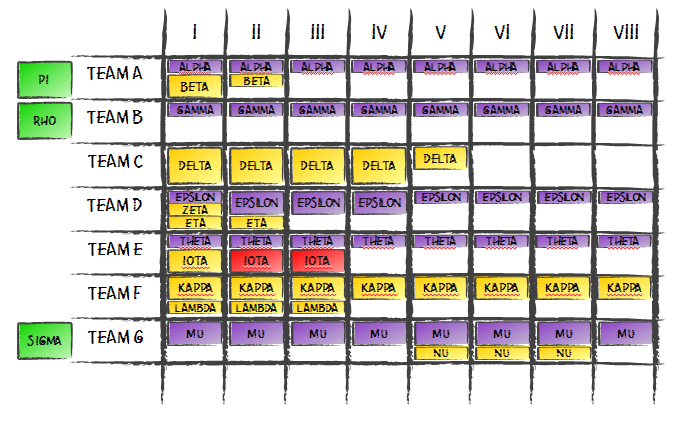

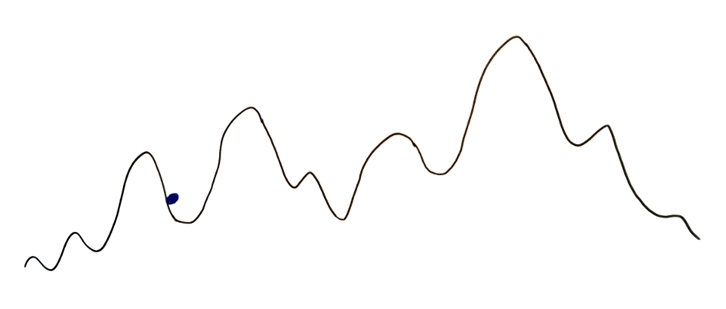

I believe I learned about the concept of culture pockets from Dave Snowden in one of his presentations. The basic idea is that within a bigger, overarching culture we can develop and sustain a different culture.

Another label that is used to describe this concept is a culture bubble.

When we think about this frame, we can come up with some examples off the top of our heads. One would be multinational organizations that have offices all around the world. Because of geography and cultural differences, each of the local offices will have an at least slightly different organizational culture. You would expect to see a different vibe in an office in India, in Poland, and in the USA, even if they are parts of the same company, and even if that company has a pretty uniform culture.

There are examples of introducing culture pockets or culture bubbles when everyone works in the same building too.

One such idea is Lean Startup. The obvious context for applying Lean Startup ideas is startups. Another, quite common one is when a big organization decides to build a product according to Lean Startup principles.

Such a team would operate very differently and very independently from the rest of the organization. Constraints would be different and so would be everyday behaviors. We’d have a culture pocket.

Another similar example is Skunkworks. It’s an idea developed by Lockheed Martin and it boils down to a similar pattern. Lockheed Martin would occasionally run a project in Skunkworks, a very independent team that has a lot of freedom and autonomy. Clearly, without all the typical constraints enforced by the company, their culture is different from the one seen in the majority of the company. By the way, a project in this case means designing and building a whole new fighter aircraft or something of similar complexity.

If we go by that analogy, every team can be a culture bubble. It is enough that the constraints within which that team operates are different from those that are standard for the whole organization. This type of culture pocket can go only as far as the team has the positional power to redesign its constraints, of course. The more positional power there is, the bigger the difference between what happens inside and outside the culture bubble.

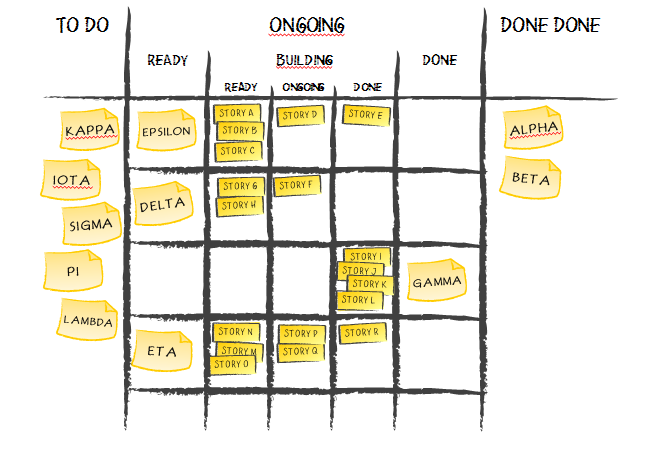

Creating a Culture Bubble

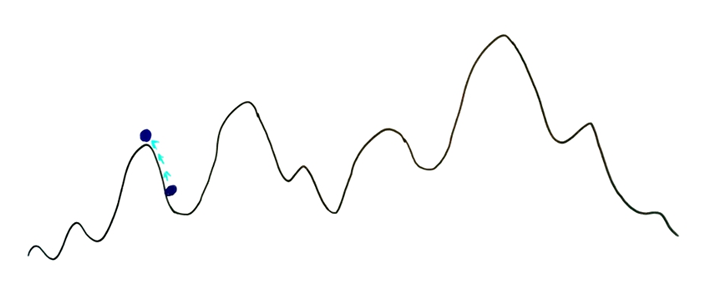

Creating and maintaining a culture pocket is a balancing act. One thing is kicking off the change. That would typically mean someone defining different rules for a part of an organization. It can simply be a team of a few people.

Normally any positional power would be an attribute of a manager. This means that such a change needs to involve that manager. They need to change rules, norms, and expected behaviors. Alternatively they need to let others decide about such stuff, i.e. give up on the power they’ve been assigned.

There is another role for managers in such a setup too. Their main responsibility is to sustain the culture bubble. Once a culture pocket is established, effort is needed to keep it going within the broader, sometimes even unfriendly, culture of the whole organization.

To give you an example, from the perspective of the whole organization it doesn’t matter at all how decisions are made in a team. What matters is that there is no problem with indecisiveness and accountability. The way most organizations understand these concepts, it means that a manager has to be decisive and can be held accountable. That may still be true even if decisions are made by the whole team using, for example, a decision-making process.

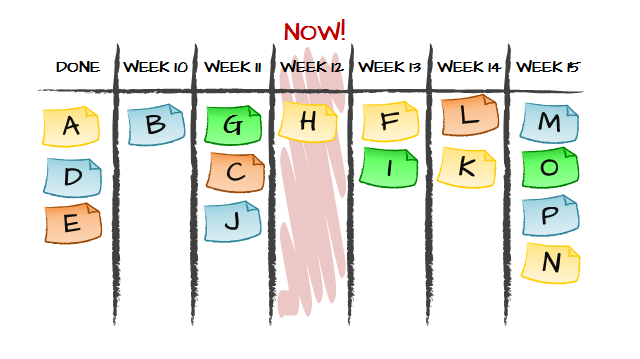

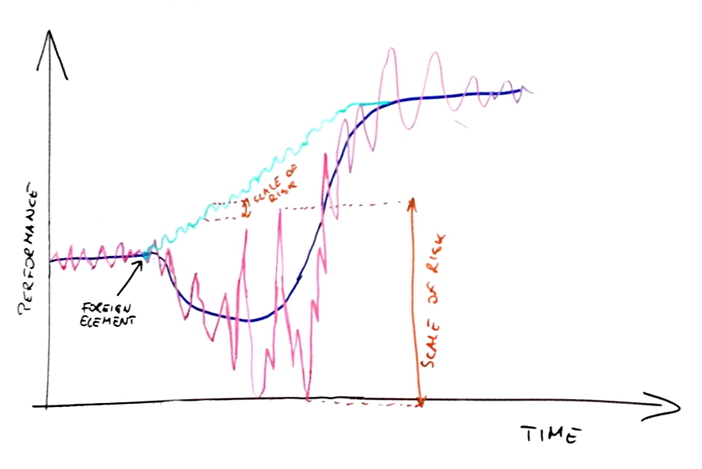

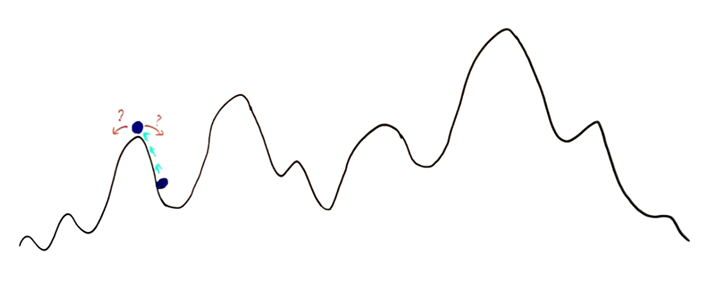

Fragility of Culture Pockets

The biggest risk related to culture pockets is that they are fragile. Typically they rest on the fact that some people who were in power distributed that power for the greater good. It doesn’t mean, however, that when they are replaced, the new person will keep a similar attitude.

The safe thing for a new person in such a situation is to adjust to the overarching culture of the whole organization. That means the culture bubble is gone, as there’s no longer anyone who takes care of translating between the two cultures.

The message I have is twofold. On one hand, if we want to see a fundamental and sustainable cultural change we need to get the top ranks involved eventually. Without that we won’t address the fragility of culture pockets. On the other hand, the simple fact that in a big organization we can’t just change the culture of the whole company doesn’t mean that we have no options whatsoever.

In my experience culture pockets, even if fragile and to some extent ephemeral, are a perfect vehicle for the self-realization of the people inside. For people in leadership and management positions they are sometimes the only way to maintain internal integrity.

Finally, sometimes they are the only option we have if we want to influence cultural change.
