Late in 2023, at Lunar, we were preparing a recruitment process for software development internships (yup, we somehow hadn’t jumped on the “you don’t need inexperienced developers anymore” bandwagon). However, ChatGPT-generated job applications were already a concern.

Historically, we asked for small code samples as part of job applications. The goal was to separate those who knew the basics from those who merely aspired to become developers someday. Granted, it wasn’t cheat-proof, but that wasn’t the goal.

It was enough to tell us the basics:

- Was the solution closer to the naive end or the optimal end of the scale?

- Were there tests, and if so, what kind?

- What about readability?

Sure, you could ask a developer friend to write it for you, but your lack of competence would eventually show at the later stages. Heck, we once had a candidate ask for a solution in a discussion group. But these were fairly rare cases.

Recruitment in the AI Era

So it’s late 2023, and we know the trick won’t work anymore. ChatGPT can generate a reasonable answer to any such challenge. Eventually, we decide against any coding task and simply ask candidates to share a public GitHub repo. Little do we know that we’re about to fall much deeper down the rabbit hole of hiring in the AI era than we ever imagined.

Sure, we understand that people will feed ChatGPT our job ad and have it generate output. After all, as always, we provide a great deal of context about what we want to see in the applications. That makes the LLM’s job easier.

We state explicitly that we seek genuine answers, and we’ll discard those blatantly generated with ChatGPT. Also, no LLM is an expert in who the candidate is, right? No LLM is an expert in me.

We’re a small company. Until then, our record was around 90 applications for the internships. Typically, it was maybe half of that. This time, we receive almost 600.

Despite all our communication, most of them are generated by ChatGPT.

AI as the First Filter

OK, it’s no surprise. Instead of writing thoughtful and thorough answers to four or five questions, each taking at least a couple of paragraphs, anyone can now just feed the questions to an AI model of their choice, and it will produce as much text as they need.

Companies’ response? Let’s use the same models to decide which resumes we should even read. Otherwise, there are just too many of them.

And yes, in our case, I read each and every one of those 600 applications. Well, at least parts of them. If the first paragraph had “AI-generated” written all over it, and the question literally asked you not to generate your answers, then my job was done. I didn’t need to continue.

By the way, next time I will do the same. However, we are oddballs. It’s now the norm for the first filter to be an AI model that decides whether to pass an application on to a human being.

In other words, the candidates generate applications with AI to pass through an AI filter.

Do you see the irony?

Just wait till someone starts putting hidden prompts in their resumes. Oh, wait, someone has definitely tried that already. I mean, if researchers do that in a much more serious context, applicants trying their luck is a safe bet.

Hiring Noise

Now, extrapolate that and ask: What does the endgame look like? More and more noise.

Let’s just wait till we have AI agents that automatically apply to jobs on our behalf with no human action needed whatsoever. Oh, who am I fooling? There are already plenty of startups pursuing this path.

The promise is that you will be able to send hundreds of applications in one click. That’s great! You increase your chances! Or do you?

Even if you do, it will only work for a very short time. Then everyone else will start doing the same, and suddenly every hiring company is flooded with tons upon tons of applications.

What will they do? Yup, you guessed it. They’ll pay another AI startup to automate this job away. Most likely, they already have.

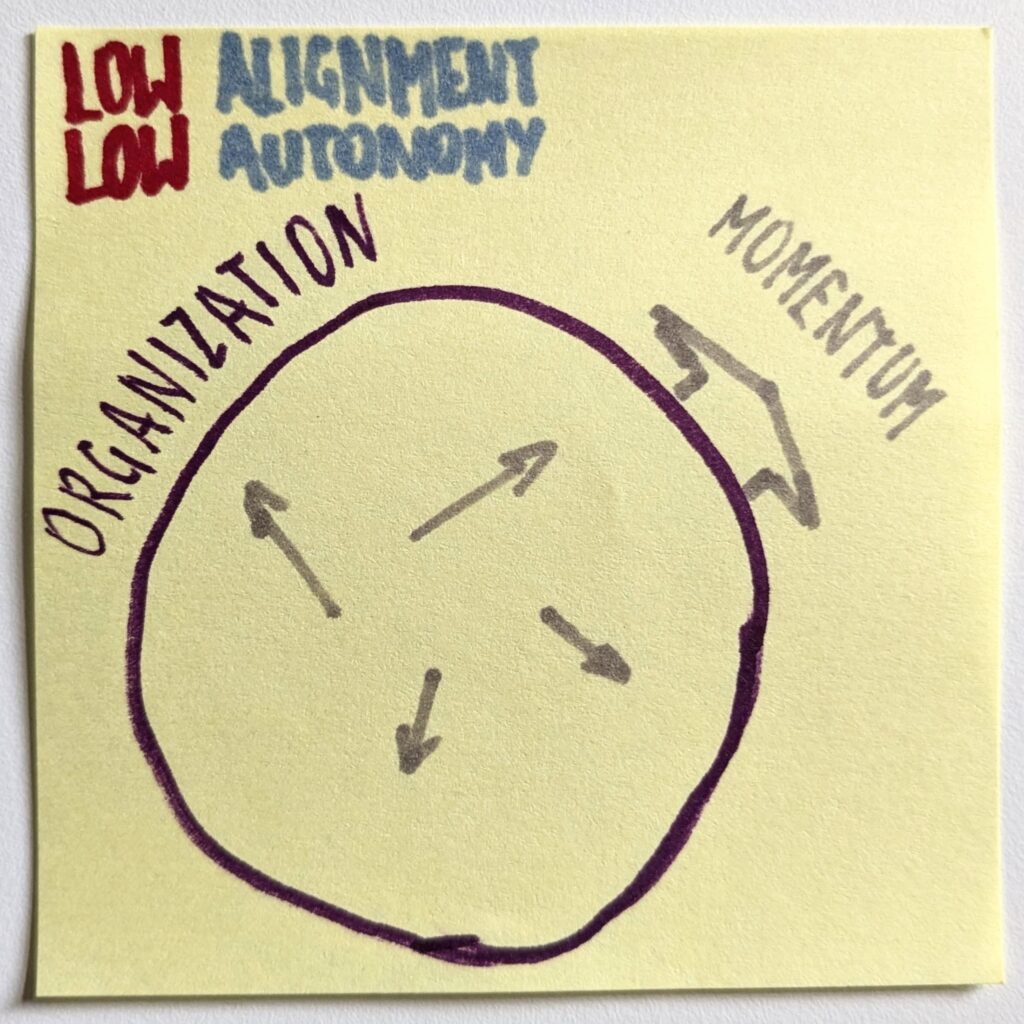

We can easily multiply the number of CVs flying around the internet by 10x or 100x. We still have only 1x of hiring managers’ attention.

The AI Era Hiring Game

The early stages of recruitment will increasingly resemble two AI models playing chess (while neither has an actual model of what a chess game is). Each will try to outplay the other.

An agent playing on a candidate’s behalf will try to write an application that will pass the filters of a hiring company’s agent. The latter, in turn, will attempt to filter out as many applications as possible while still keeping a few relevant ones.

Funnily enough, I’m guessing that what will make you pass through the AI filter will not necessarily be the same things that would make you pass when a human being reads your resume.

LLMs optimize for the most likely output. So “standing out” isn’t necessarily the optimal strategy.

I remember when an applicant drew a comic book for us as their application. It sure caught our attention. I bet an AI model would dismiss it. Oh, and yes, she ended up being a fabulous candidate, and we hired her.

Which doesn’t mean drawing a comic book guarantees you a job at Lunar, of course.

If we believe the startups operating in the recruitment niche, hiring these days is just a game of volume. Send and/or process more resumes, and you’ll find your perfect match.

What Is a Perfect Match?

I’ve been recruiting for more than two decades. I’ve made my share of great hires. I’ve made a lot of mistakes, too. Most importantly, though, I’ve made oh, so many good enough hires who have ultimately turned out to be excellent later on.

It doesn’t matter how extensive your hiring procedures are. After a week of close collaboration, you will know more about the new hire than you could have learned throughout the whole recruitment process.

Applying for a job is like submitting an abstract for a conference’s call for proposals. A great talk description doesn’t mean that the session itself will be great. It just means it is a good abstract. And that the person who submitted it is probably good at writing abstracts. It tells little about what kind of speaker they are.

By the same token, a great resume is just that. A great resume.

With AI in recruitment, we put almost the entire spotlight on the applications. It becomes a game of writing and analyzing CVs.

Last time I checked, no company was trying to find a person who was great at writing resumes (or more precisely: getting an AI model to generate a resume that another AI model would like).

Renaissance of Good Old Coding Interviews

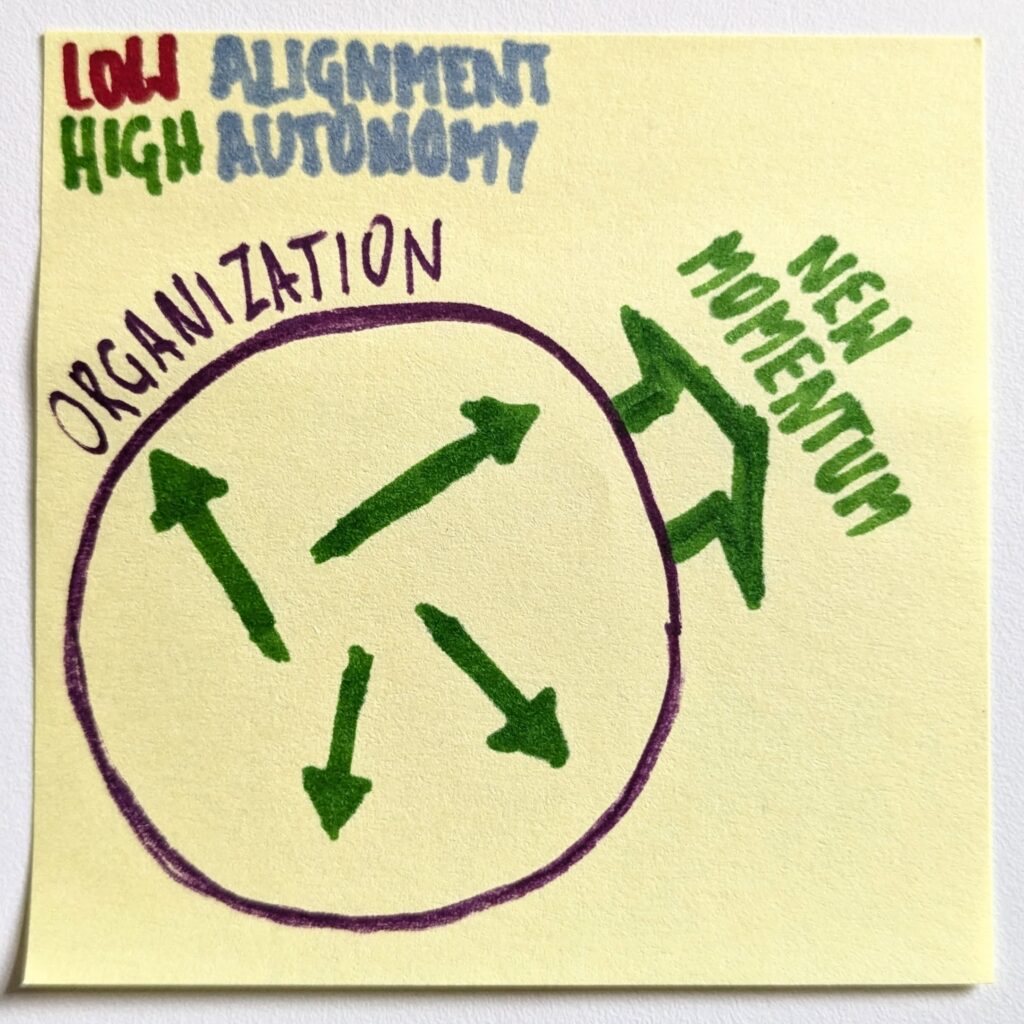

It’s no surprise that in-person coding interviews are gaining popularity again. Increasingly, candidates will be allowed, and even encouraged, to use their AI tooling of choice during them. Ultimately, that’s how developers work every day.

After all, these interactions were never about knowing the answer. OK, they should never have been about the answer. They should have been about how a candidate thinks, how they iterate their way to a better solution, and when they deem it good enough. They should have been about working together with another professional. About all those intangibles that we don’t see unless we actually work together.

We will see more of them, and more of them will happen on-site, not remotely. As a hiring person, I want to understand which part of someone’s train of thought is their own creativity and which came as copypasta from ChatGPT (or Claude Code, or whatever).

There’s no shortage of code-generation capabilities. We still don’t have a substitute for judgment, though.

Why Is Hiring Broken?

So far, so good, you could say. We return to proven tools and focus on what really matters.

Yup. That is, as long as we can cut through the noise. Next time we open internships at Lunar (and we will), I expect more than a thousand applications. Sure, many will be crap, but it will take plenty of work to figure out which ones are not. The effort needed to navigate the noise grows exponentially.

Under the banner of “we are improving recruitment,” we actually did a disservice to both parties that play the hiring game. Candidates complain that they send lots and lots of resumes yet don’t even get responses anymore. Hiring companies have to deal with a snowballing wave of applications, which makes finding a great match nearly impossible.

So much for good intentions and improvements.

All it took was removing the effort required to prepare an individual job application. The marginal cost of thinking through those five answers and typing them into a form is gone, and thus we can spray our resumes everywhere with one click of a mouse.

Thank you, AI, for breaking hiring for us.

(And yes, I know it’s all us, not AI.)