When I first stumbled upon Conway’s Law, it was anything but intuitive to me.

Organizations which design systems are constrained to produce designs which are copies of the communication structures of these organizations.

Melvin E. Conway

Why would an organizational structure of a company have anything to do with software architecture? After all, there are whole bodies of knowledge covering high- and low-level concepts of software development. Aren’t these things properly planned and executed?

I mean, sure, in the details, there will always be a mess. However, in a grand scheme of things, the high-level design should be far more controllable than the law suggests.

In retrospect, Conway’s Law is one of those things that, once you’ve seen it, you can’t unsee it.

Conway’s Law and Spotify Model

Whenever Conway’s Law emerges in a discussion, my favorite example is Spotify. I’m a user since the beta. I know several people who worked there in leadership positions. Most of all, Spotify, at some point, was very vocal about their organizational approach, the so-called Spotify Model. It’s like I have enough data to connect the dots.

As popular as it was at the time, if you look at the Spotify Model, it’s hard not to see it as a glorified matrix organization. Yes, there’s more autonomy across the board, but the communication paths? They all scream “Matrix!”

What should we expect from their product design if Conway’s Law is true? My best guess is a set of features interconnected in non-obvious ways, with a common issue of lack of alignment, and a lot of local optimizations. And that’s precisely what we get.

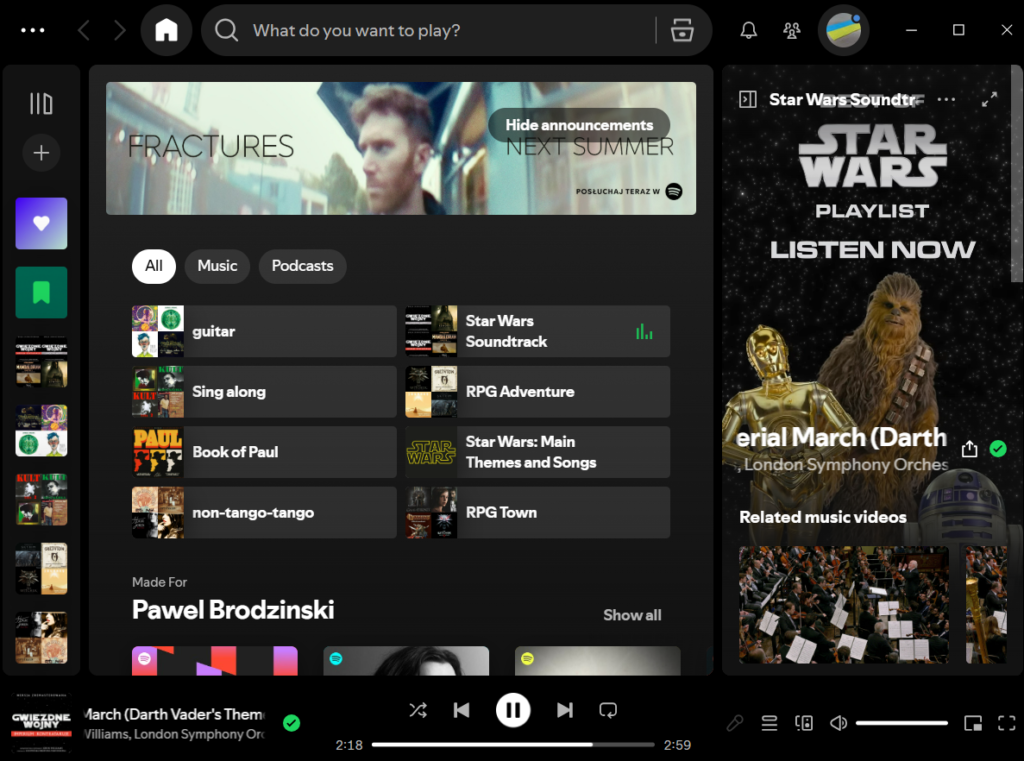

Spotify UX

While Spotify’s codebase is not open, its UX is quite telling. By now, it’s a clutterball of misaligned ideas fighting for your attention.

From a user perspective, it’s fascinating, although not in a good way, how I struggle to find the same options that I use regularly (like, multiple times a week, for years). Managing playlists? Oh, boy. Search? Every other time, it’s in the wrong context. Inconsistencies between mobile and web? Don’t get me started.

But maybe it’s just grumpy me. Fine. I’ve just literally googled “who loves spotify ux?” and among the top results are:

- Who at Spotify designed their interface, and why is that person clinically insane?

- Why is Spotify so popular when it’s UX is pretty bad?

- Who think Spotify App Experience (UX) sucks and why?

Doesn’t sound like a love wave, really. It’s either one: Google reads my mind, or the UX is problematic.

All that comes from a product praised (and copied) for some product innovations, such as Discovery Weekly or Spotify Wrapped. Most definitely, not everything is wrong here. It’s just a matrix of somewhat misaligned ideas, good and bad. That isn’t really a recipe for customer delight.

By the way, did you know that Spotify has a custom seek bar for Star Wars songs that looks like a lightsaber? And you can change the looks of the specific lightsabers, no less. There could hardly be a better illustration of “a matrix of somewhat misaligned ideas, good and bad.”

Communication, Alignment, Autonomy

If you consider Conway’s Law, it’s hard not to see how Spotify UX is a precise map for the Spotify Model. Wherever communication between teams (squads, guilds, chapters, or whatever the hell they call them) was messy and faced multiple conflicting goals, so did the interconnections between features.

To make things harder, Spotify sprinkled high autonomy over their feature teams. So the matrix organization made alignment a challenge, yet decision-making was pushed down the hierarchy nonetheless.

A high-autonomy-limited-alignment setup is not a recipe for effective work. And I say that from experience. At Lunar, we walked that path. The lesson? As much as I’m a huge fan of distributed autonomy, I’d always consider alignment first.

In the late 2010s, Spotify had a few dozen relatively independent feature teams responsible for specific parts of the product. Small wonder that the “mobile playlist” team had different ideas from the “web playlist” team. Communication paths that might have fixed that were watered down by an overly complex organizational model.

By now, the engineering headcount is already in the thousands, and the team count is thus in the hundreds. Just imagine the product mess that the Spotify Model would create. No wonder they largely abandoned it.

OK, but what does it have to do with AI?

AI Product Management

Product people are encouraged almost as strongly as developers to use AI extensively. It comes as a mixed blessing. Early intensive prototyping is a viable path, and it opens up whole new avenues for validating product desirability.

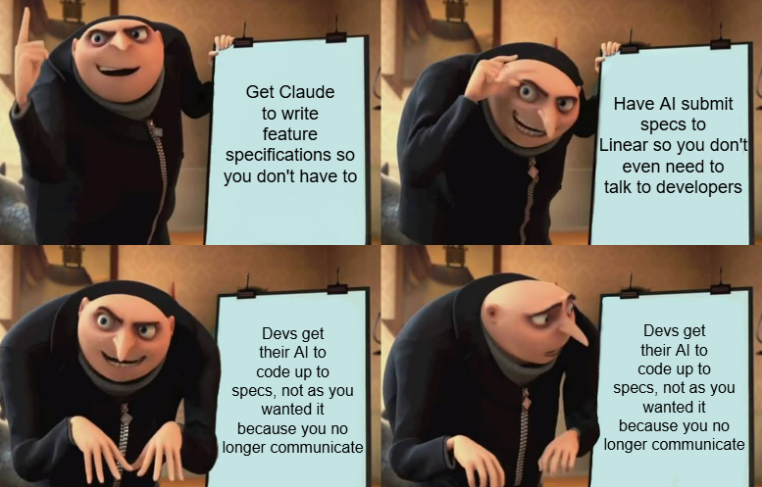

LLMs make it just so easy to create extensive specifications. We can attach all the existing product descriptions as context, let AI do its own research, analyze the codebase, and more. It will produce a nice, detailed description, and the engineers will nail it.

Except we misinterpret the ease of creation for the value of the output.

A sidenote: It’s the same aspiration we had when we tried to model systems with UML diagrams, and it didn’t work either. It’s not about the tools. It’s about the iterative exploratory nature of designing software.

Still, AI can create the same illusion we followed many times before—that we can specify software upfront in detail from the outset. The output looks good. Better than ever, even. And we get it effortlessly. It’s the AI model that does the heavy lifting.

It takes some time to realize that product development doesn’t work like this. It never has. And it has nothing to do with the tools we use to create specs.

AI Kills Communication

In the past, product specifications were brief. Save maybe for some Business Analysts, no one fancied writing long forms describing all the feature details. We relied on just enough context and communication between engineers and product people.

However, now, generating detailed specifications with AI is easy and cheap. Initially, we might even verify whether the output is correct. Eventually, we’ll give up. One, often they’d be good enough. Two, LLMs are great at creating outputs that sound sensible. Three, honestly, read a 4-pager with a feature description and tell me you understood everything.

At some point, and sooner rather than later, a product manager will stop carefully verifying AI output. Soon, the developers will follow. That is, given they read the extensive specs at all in the first place. It quickly evolves into the “you give me the specs, I’ll get them built, no questions asked” kind of scenario.

The key part here is: “no questions asked.” It’s like going all the way back to the 90s, way before Agile happened. We build up to the specs, whether it makes sense or not. The only difference is that we deal with the development so much faster. Does it matter, though, when we build the wrong thing?

Conway’s Law Meets AI Product Management

The most important change happened in between the lines, though. A one-sentence-long feature description was, by definition, full of holes and required the team to discuss. A detailed specification creates the illusion that a feature has been thought through from all angles.

The former invites communication. The latter discourages it.

As we close down communication paths, Conway’s Law kicks in. We’re bound to design architectures that copy organizational communication structures.

Less peer-to-peer communication and less collaborative exploration mean a more fragmented and less coherent architecture. As each individual is treated more and more as an isolated island, so will be the code that individual develops.

The effect will affect both a technical level (think code architecture) and a product level (think UX). Give such a way of working a couple of years, and we’ll start praising Spotify for its exceptional product design in comparison.

AI Is on a Collision Course with Conway’s Law

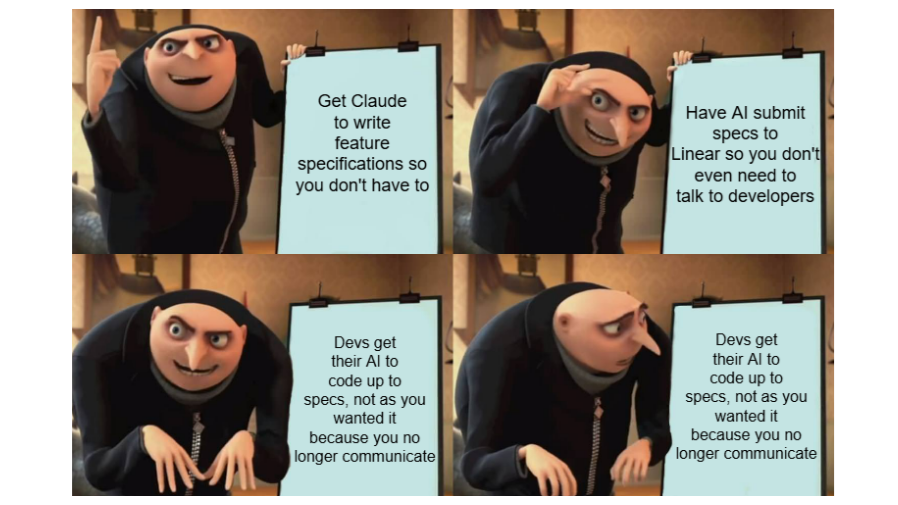

Dev: The last feature is on staging.

PM [checks the feature out]: It doesn’t work as specified! Here’s what should be different.

Dev [checks the code, checks the specs, 2 hours have passed]: Well, actually, it works as specified. Here are the specific parts that prove my point.

PM: Oh, my Claude must have hallucinated that part.

That’s an actual conversation that I heard that inspired this article. When I consider the impact of AI on communication, the paths connecting product and engineering are most affected. Yet, similar effects emerge across the board.

- A single developer may produce more code with AI tools, so they are more independent and, even under time pressure, they don’t need to collaborate with other engineers as often. We reduce the need for communication.

- Coding agents running independently step on each other’s toes way more carelessly, creating merge conflicts and triggering rework (the worst kind of rework, actually). Operators of said agents are thus better off when they isolate their active work areas. Which means less coordination and less communication.

- The asynchronous nature of working with AI agents incentivizes the “throw over the fence” hand-offs. My agent’s output may be ready when I’m already off, but let that not stop you from doing whatever you need to do with it. Again, less human-to-human communication.

- The sheer amount of documentation we can generate on different levels automates away collaborative activities (or parts of them). Code review? Let an agent handle that. Even less communication, perchance?

If Conway’s Law holds, we may be up for a rude awakening. As an industry, we are hyped about all sorts of “my agent talks to your agent” scenarios. It’s easy to see the upside—automating away the mundane, tedious, and routine. It’s hard to see the long-term cost of deteriorating communication paths.

So, we either learn to navigate the new realities of collaboration better, or we accept that the products we use will increasingly be crap. Which one will it be?

These posts are not generated. 웃 https://okhuman.com/nf1GGg

Leave a Reply